Knowing what resonates best with your audience is often a guessing game. This is where A/B testing comes into play, helping you optimise your website and recognise what works well and what doesn't.

Whether you're struggling to reach campaign goals or looking to improve your current marketing strategy, A/B testing is an efficient tool for solving these challenges.

What is A/B testing?

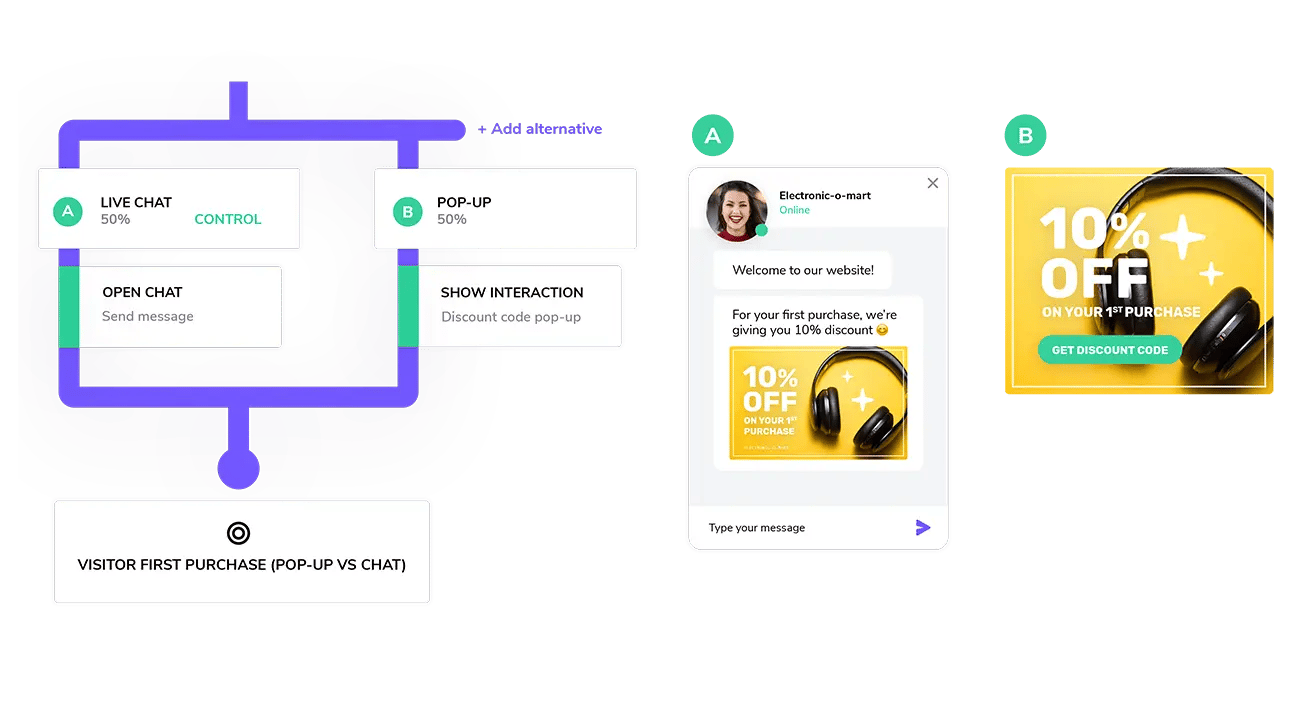

A/B testing, also known as split testing, involves displaying two different variations of your content to your online visitors. Typically, 50% of viewers are shown variation A (known as the control) and the other 50% are shown variation B (known as the variation).
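As a rough illustration of the 50/50 split, here is a minimal Python sketch. It assumes each visitor has some stable identifier (for example a cookie value); the function name `assign_variation` and the experiment label `cta-test` are made up for this example and do not come from any particular tool.

```python
import hashlib


def assign_variation(visitor_id: str, experiment: str = "cta-test") -> str:
    """Return "A" (control) or "B" (variation) for a visitor.

    Both the visitor ID format and the experiment name are hypothetical.
    """
    # Hash the experiment name together with the visitor ID so the same
    # visitor always lands in the same group for this experiment.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Even hash values map to A and odd ones to B, giving roughly 50/50.
    return "A" if int(digest, 16) % 2 == 0 else "B"


if __name__ == "__main__":
    groups = [assign_variation(f"visitor-{i}") for i in range(10_000)]
    print("A:", groups.count("A"), "B:", groups.count("B"))
```

Hashing rather than picking at random on every page view means a returning visitor keeps seeing the same variation, which keeps the measurement consistent.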

The performance of both versions is measured, then evaluated and used to optimise your final content. The aim is to determine which variation performs better for the given conversion goal.
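One common way to judge whether a difference between the two versions is more than chance is a two-proportion z-test. The sketch below uses only the Python standard library; the function name `compare_variations` and the visitor and conversion counts are invented for illustration, and your testing tool may use a different statistical method.

```python
from math import sqrt
from statistics import NormalDist


def compare_variations(conv_a: int, n_a: int, conv_b: int, n_b: int) -> dict:
    """Compare conversion rates of A (control) and B with a two-sided z-test."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that A and B convert equally well.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_error == 0:
        raise ValueError("Cannot test: no variation in the observed conversions")
    z = (rate_b - rate_a) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {
        "rate_a": rate_a,
        "rate_b": rate_b,
        "relative_lift": rate_b / rate_a - 1,
        "p_value": p_value,
    }


if __name__ == "__main__":
    # Made-up numbers: 2,400 visitors per variation.
    result = compare_variations(conv_a=120, n_a=2400, conv_b=156, n_b=2400)
    print(result)
```

A small p-value (0.05 is a frequently used threshold) suggests the difference is unlikely to be down to chance alone.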

Today, it’s a common feature in most martech software and is used across various channels, from email and e-newsletters to landing pages and homepages. Some tools will allow you to test more than one variant against the control variant.

How to A/B test

A/B tests are usually done by testing one specific variable at a time. Testing one element, such as an image or copy text, can make it easier to draw conclusions.

You could test whether different copy text of a CTA button on a landing page has an effect on your conversion rate. A CTA with “Buy now” could potentially resonate better with your online visitors and convert more than “Go to purchase”.

The elements you choose to test will depend on the campaign at hand. Here are a few ideas of what you could test:

- Images

- CTA button (text, colour, position)

- Email subject line

- Headlines and subheadings

- Ad copy

- Forms (location, form-fill elements)

The rule of thumb is to test one element at a time. However, some argue against testing single variables, claiming it could limit how far a design can improve. This is known as getting stuck at a local maximum.

The hypothesis is that testing two radically different variations can actually result in a better design outcome. This technique is often applied in larger-scale website design projects, but split testing a specific element still seems to be the preferred method among marketers.

When experimenting, keep in mind that traffic is essential for conclusive results: you need a decent number of online visitors to engage with your content or campaign.

Devoting time to A/B testing is also something to consider. Tests can last anywhere from a few days to a few weeks. Again, this depends on the amount of traffic you’re getting.
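To get a feel for how traffic and test duration relate, here is a rough Python sketch based on the standard sample-size approximation for comparing two proportions. The baseline rate, the lift you hope to detect, the daily visitor count and both function names are assumptions for illustration, as are the commonly used defaults of 5% significance and 80% power.

```python
from math import ceil, sqrt
from statistics import NormalDist


def visitors_per_variation(baseline_rate: float, relative_lift: float,
                           alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate visitors needed in EACH variation to detect the lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


def estimated_days(per_variation: int, daily_visitors: int) -> int:
    """Days needed when daily traffic is split evenly between A and B."""
    return ceil(2 * per_variation / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 5% baseline conversion, hoping to detect a 20% lift.
    needed = visitors_per_variation(baseline_rate=0.05, relative_lift=0.20)
    print("Visitors per variation:", needed)
    print("Days at 1,000 visitors/day:", estimated_days(needed, 1000))
```

The smaller the improvement you want to detect, the more visitors you need, which is why low-traffic pages tend to need longer tests.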

The benefits of A/B testing

A/B testing is an effective testing tactic if you want meaningful results quickly. Some key benefits are:

- Optimising conversion rates

- Understanding online visitor behaviour and customers better

- Driving better engagement

- Improving online sales

Optimising conversion rate is usually the main goal of A/B testing. At the Technology For Marketing Expo in London in 2019, this was a key topic among speakers.

One particularly interesting session by Workbooks and Communigator showcased how fewer fields on a landing page form can increase the number of form fills. This isn't surprising, but by performing an A/B test, they were able to verify that a short variation requiring the user to only fill in their email address generated 33% more form fills than a form with multiple fields.

Another interesting example is from one of President Barack Obama’s fundraising campaigns, which raised an additional $60 million using A/B testing. By testing two different elements, media (image vs. video) and CTA button copy text, they were able to optimise their website and increase the sign-up rate by 40.6%.

So A/B testing isn’t a new tactic, but it is clearly an effective one, helping you figure out what works best for your business. It’s time you stop guessing and start optimising! You can read more about our A/B testing tool here, which makes it possible to run A/B tests for proactive automated live chat messages and interactive content on your website.

To learn more about how to optimise your website and succeed during peak sales seasons, check out our guide with 12 tips for your eCommerce strategy.

Editor's note: This blog was originally posted in 2019 but has been completely revamped and updated for accuracy.